From Volume to Value: Building a Sustainable AI Inference Platform

Timon Agar

Engineering and product team.

BUSINESS UPDATE · MARCH 20, 2026

Decreased Volume, Increased Efficiency

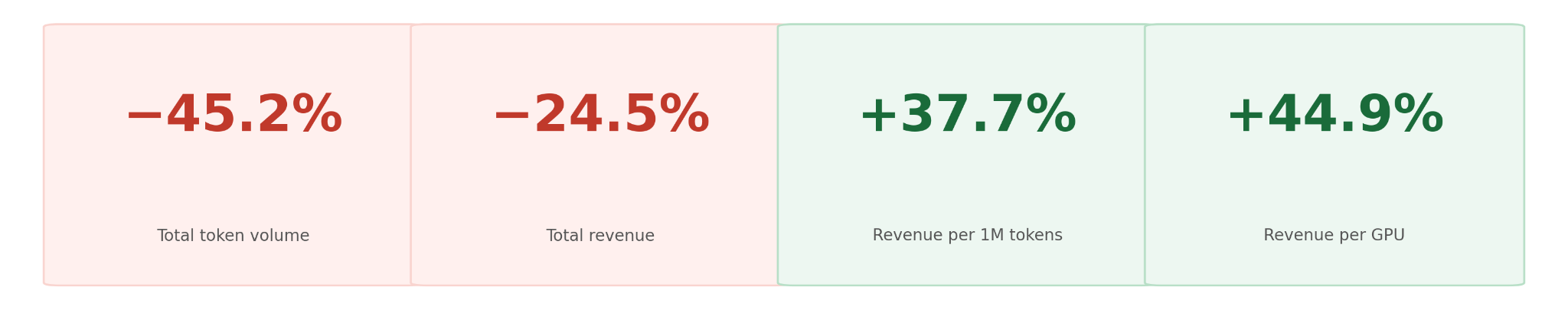

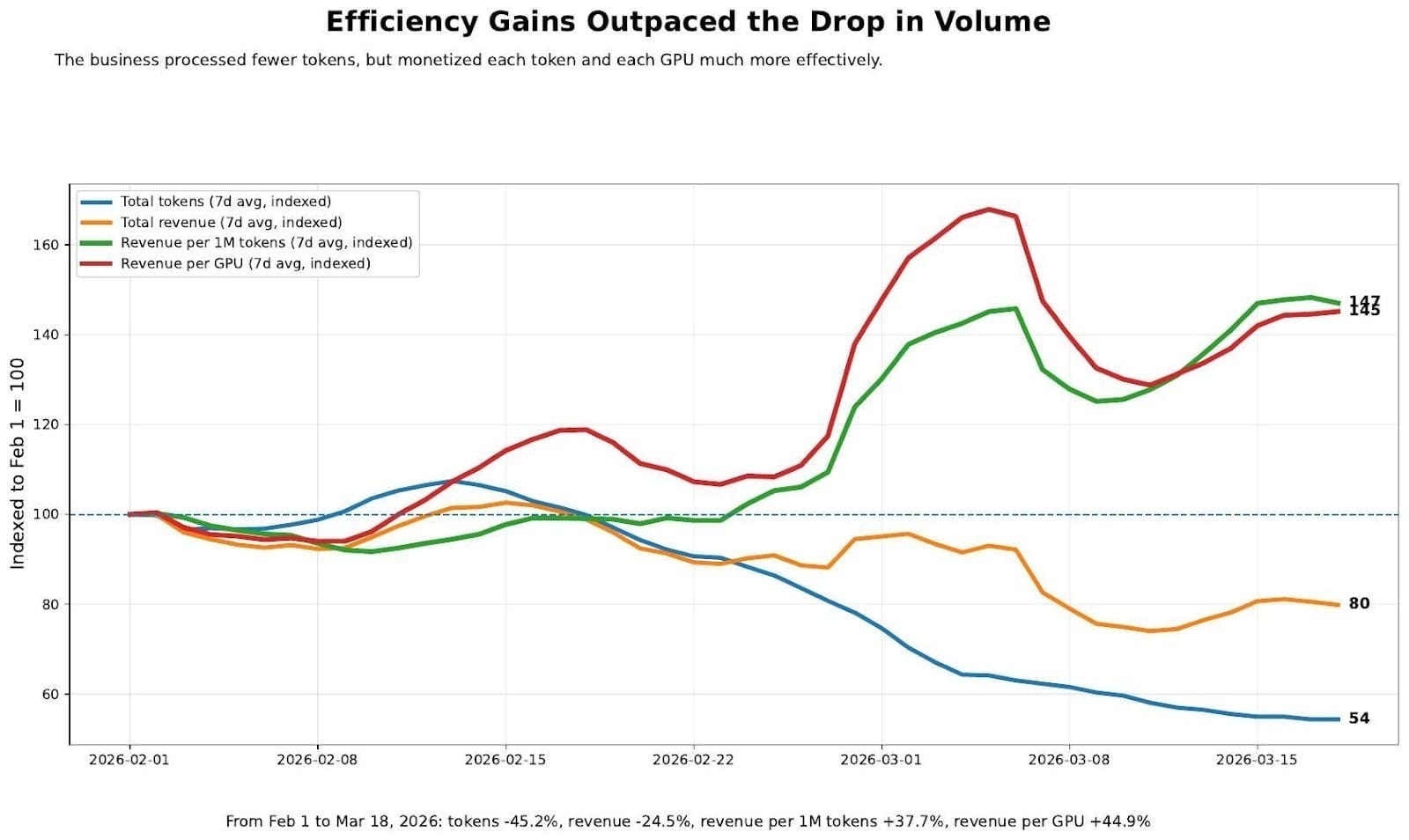

We processed 45% fewer tokens over the last six weeks. On the surface, that may seem like pretty bad news, but the true story is one of massive increases in efficiency and future sustainability.

Tokens are down nearly half. Revenue is down a quarter. Sounds like a rough six weeks. But look at what those two numbers together tell you: every token we serve is now worth significantly more than it was in February, and every GPU in our fleet is pulling harder than ever.

This didn't happen by accident. We made deliberate decisions to cut volume that was costing us far more than it was earning — and the efficiency gains have outpaced the headline declines by a wide margin.
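As a rough back-of-the-envelope check, the two headline figures can be combined into an implied change in revenue per token. This sketch assumes "nearly half" means the 45% token decline and "a quarter" means a 25% revenue decline; the function name is illustrative, and the exact revenue figure is not stated in this update.

```python
def revenue_per_token_change(token_change: float, revenue_change: float) -> float:
    """Relative change in revenue per token, given relative changes
    in token volume and revenue (e.g. -0.45 for a 45% decline)."""
    return (1 + revenue_change) / (1 + token_change) - 1


# Assumed inputs: tokens down 45%, revenue down roughly 25%.
implied_change = revenue_per_token_change(token_change=-0.45, revenue_change=-0.25)
print(f"Implied change in revenue per token: {implied_change:+.1%}")
```

With these rounded inputs the implied gain is a little over 36%, in the same range as the measured 37.7% rise in revenue per million tokens reported in this update. The gap is plausibly down to rounding and slightly different measurement windows.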

We Refocused and Refined Our Approach to Business

Not all token usage is created equal. Over the past several months, we were serving enormous volumes that looked impressive on paper but were actively working against us financially. Here's what we removed:

- ~20B tokens/day from a sponsored free tier via OpenRouter (TNG Tech). This program ended on their timeline — it was always a limited engagement.

- ~10B tokens/day from a 200 requests/day free quota offered to ~11,000 users. Average cost: ~$6/user/month. Revenue: zero.

- ~10B tokens/day from a privately hosted model running at a 9:1 cost-to-revenue ratio — completely underwater.

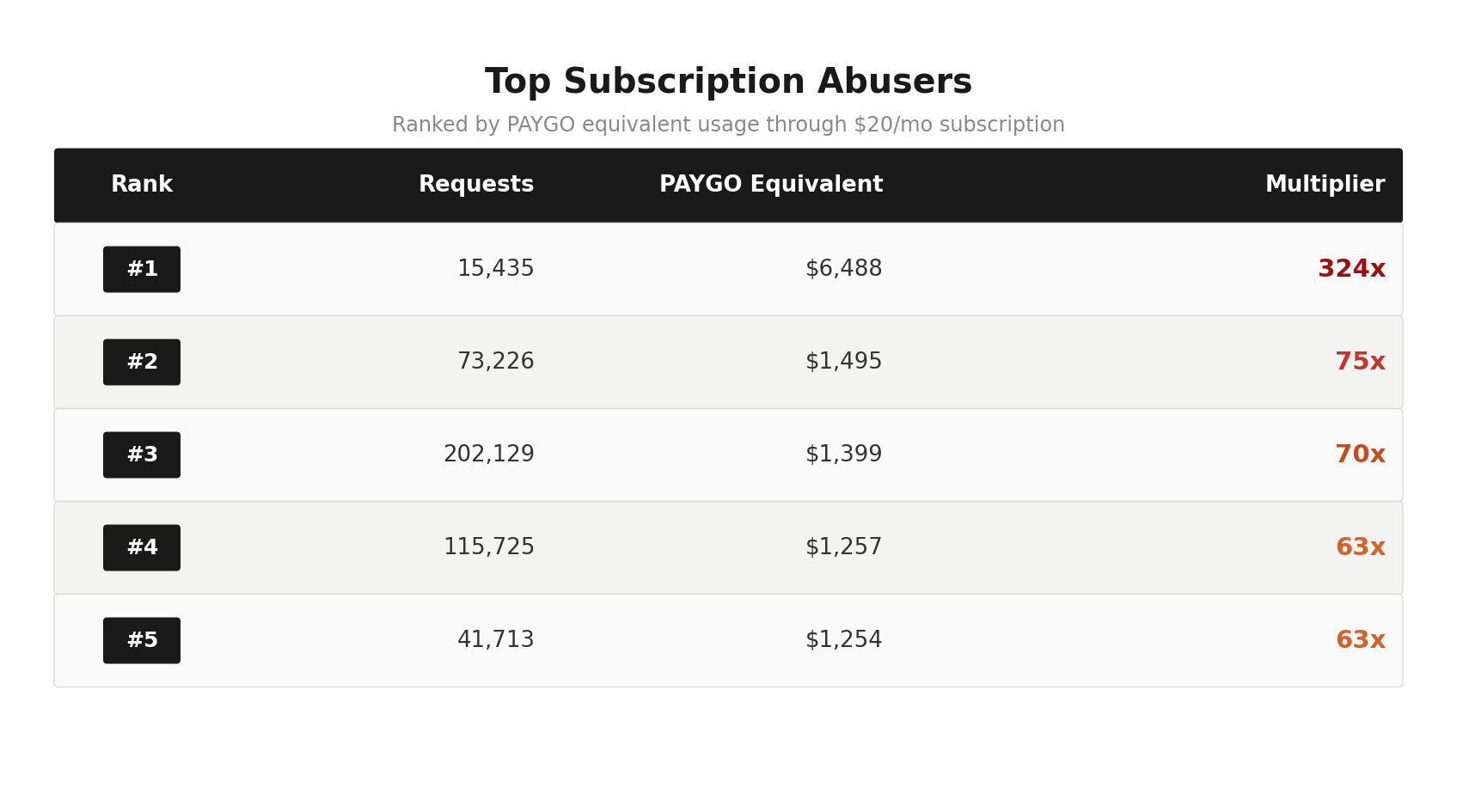

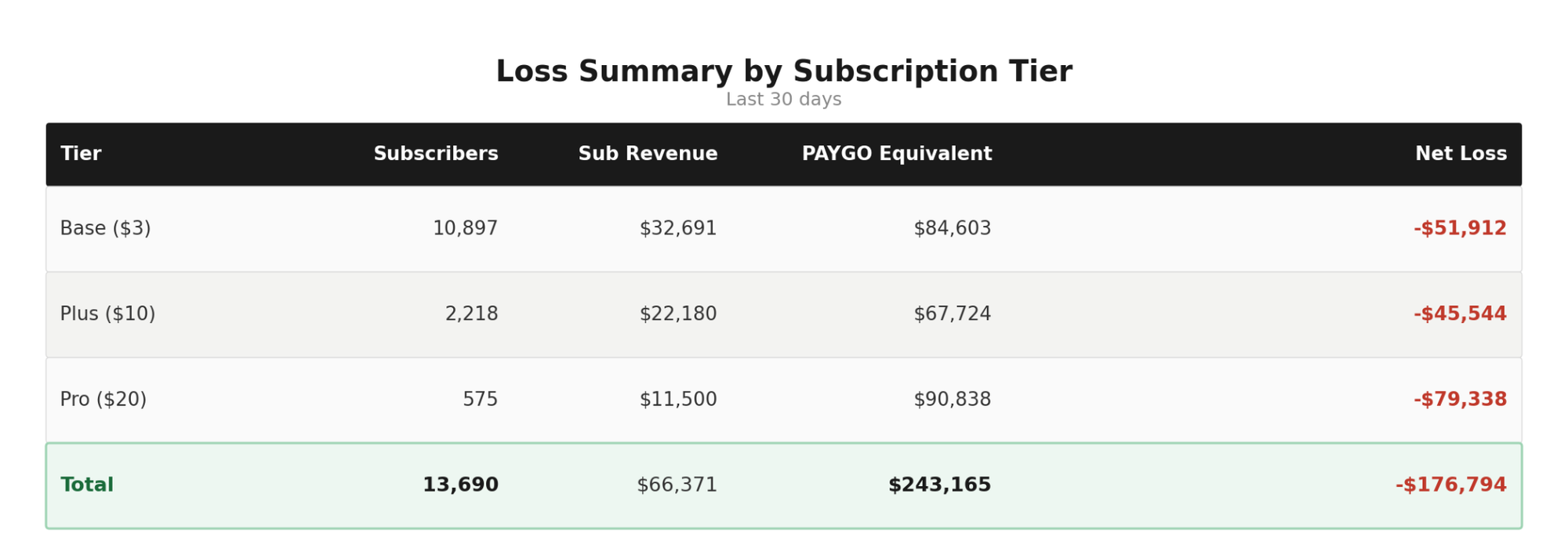

- Billions more from subscription abuse and misuse, including 3–4 companies routing pay-as-you-go usage through our $20/month subscription tier. These accounts were generating up to $6,400 in actual usage per account, adding up to hundreds of thousands of dollars in monthly losses across all subscription tiers.

This was a problem we couldn't ignore, and we acted decisively. Changes to our subscription model closed the door on that kind of abuse — while preserving what subscriptions are meant to be: a viable, affordable option for everyday users. High-volume customers are now routed to pay-as-you-go, ensuring the economics are fair for everyone.
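To put the figures in the list above side by side, here is a minimal sketch of the arithmetic. The constants are the approximate numbers quoted in the list; the variable names are illustrative, and the $6,400 usage figure is assumed to be per month, which the text does not state explicitly.

```python
# Approximate figures quoted in the list above.
FREE_USERS = 11_000               # users on the 200 requests/day free quota
COST_PER_FREE_USER_MONTH = 6.0    # USD per user per month, zero revenue
COST_TO_REVENUE_RATIO = 9.0       # privately hosted model: $9 cost per $1 revenue
SUBSCRIPTION_PRICE = 20.0         # USD per month
PEAK_USAGE_PER_ACCOUNT = 6_400.0  # USD of actual usage (assumed monthly)

# Monthly cost of the free quota, which earned nothing.
free_tier_monthly_cost = FREE_USERS * COST_PER_FREE_USER_MONTH

# Loss on every dollar of revenue from the privately hosted model.
loss_per_revenue_dollar = COST_TO_REVENUE_RATIO - 1.0

# How far the heaviest subscription accounts exceeded what they paid.
usage_to_price_ratio = PEAK_USAGE_PER_ACCOUNT / SUBSCRIPTION_PRICE

print(f"Free quota cost: ~${free_tier_monthly_cost:,.0f}/month")
print(f"Hosted model loss: ${loss_per_revenue_dollar:.0f} per $1 of revenue")
print(f"Heaviest subscribers used ~{usage_to_price_ratio:.0f}x their subscription price")
```

Under these assumptions the free quota alone cost roughly $66,000 a month, and the heaviest subscription accounts consumed about 320 times what they paid.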

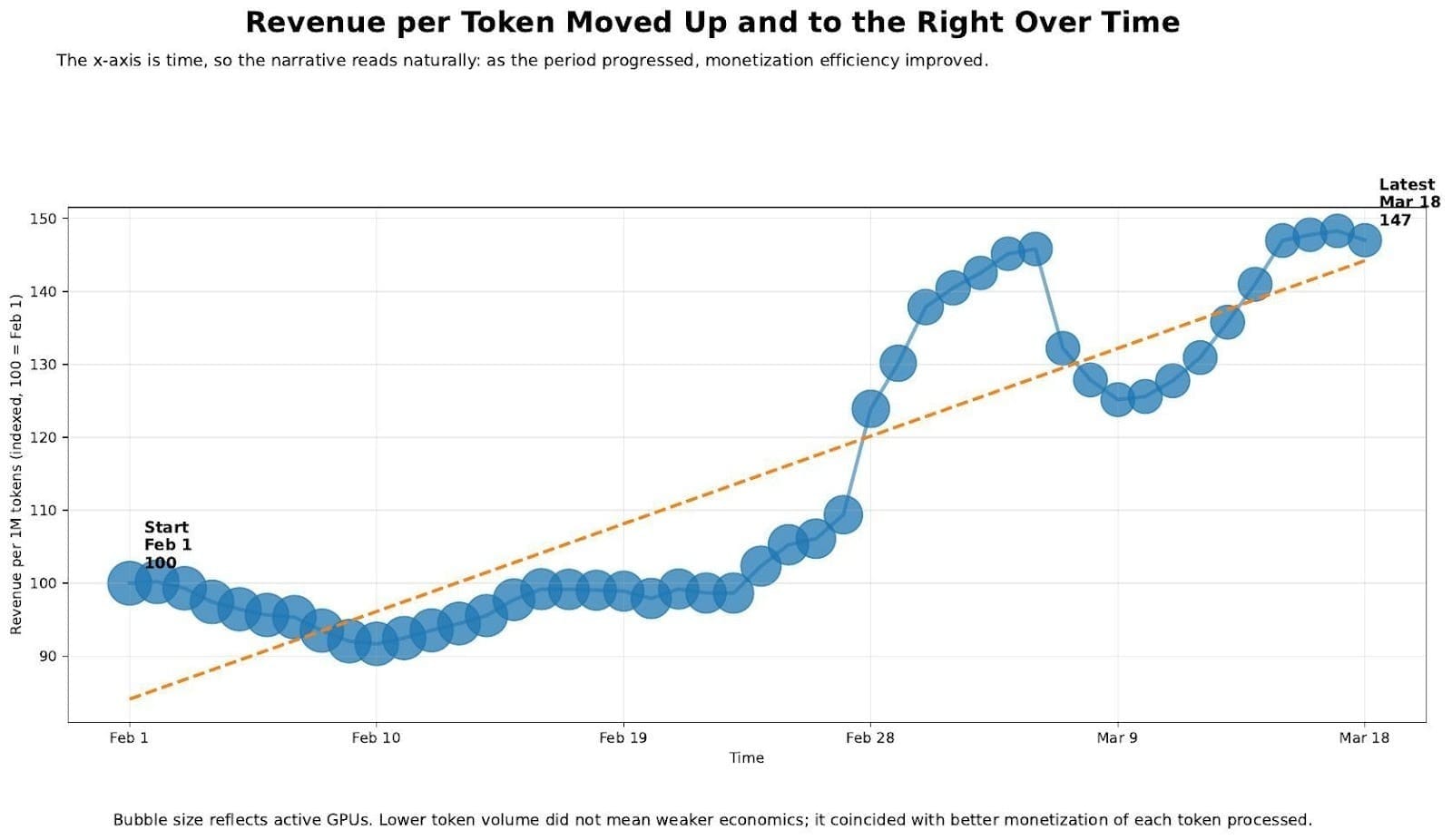

Each Token Served Is Earning More Revenue

Following these changes, revenue per million tokens has climbed 37.7% since February 1. When we stopped subsidizing unprofitable usage, the economics of every remaining token improved dramatically. The trend has been consistently upward, with the sharpest inflection at the start of March, when the last major subsidy programs wound down.

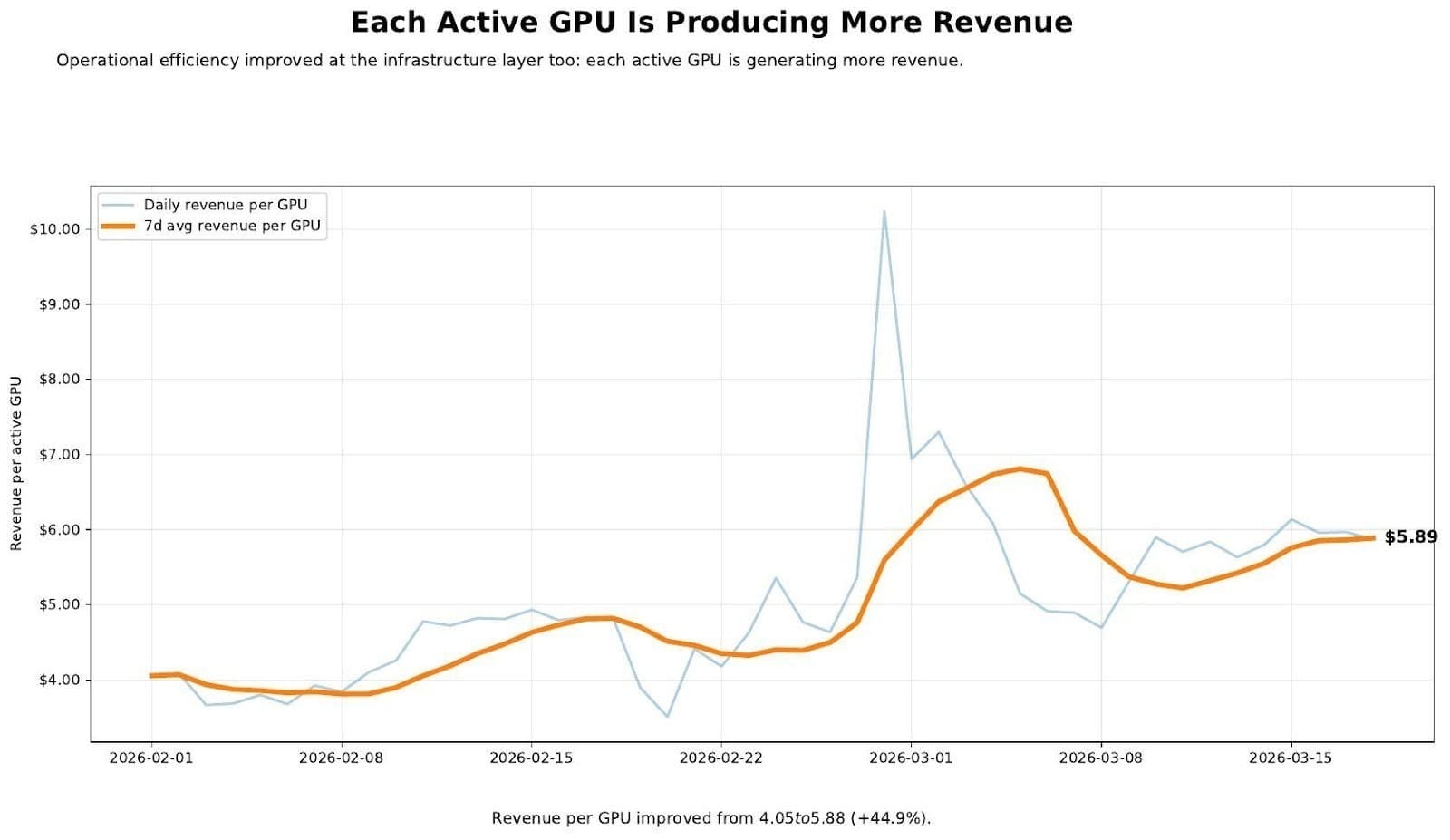

Each GPU Is Pulling Its Weight

An additional factor that we had to consider is hardware availability. The AI industry is currently grappling with a global GPU shortage that has made hardware increasingly scarce and expensive. As providers compete for limited resources, optimizing the infrastructure we already have has never been more critical — and that's exactly what we've done. Driven both by necessity and a genuine commitment to improving the platform, we've pushed our available hardware to new levels of efficiency.

Revenue per GPU improved from $4.05 to $5.89 — a 44.9% gain — over the same period. As we wound down unprofitable workloads, we also reduced our active H200 count, so infrastructure costs fell alongside volume. The fleet is now smaller and significantly more productive per machine.

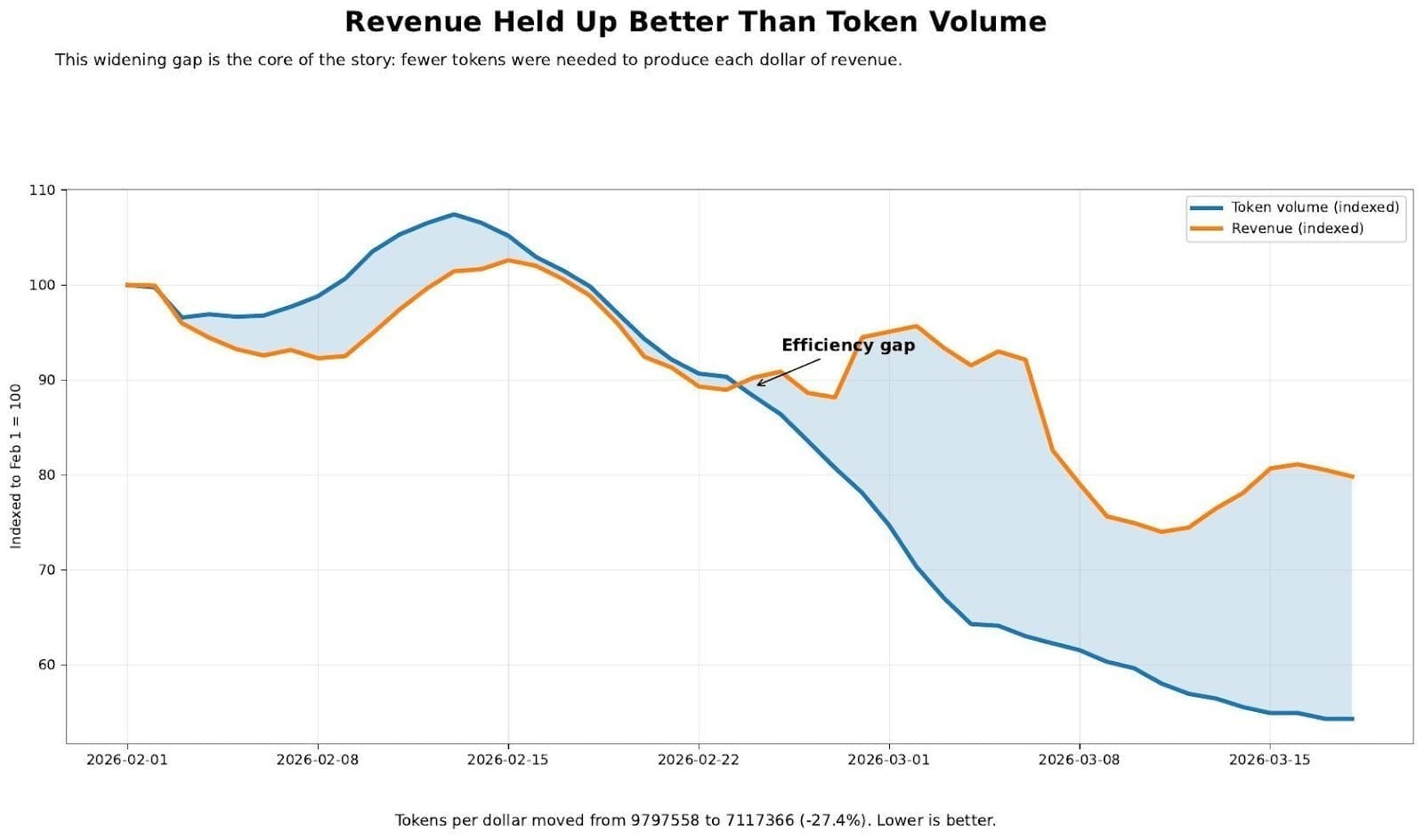

Revenue Quality Is Improving

Revenue held up far better than token volume — and that widening gap is exactly what we were aiming for. Tokens per dollar fell 27.4%, from ~9.8M to ~7.1M. Lower is better: each dollar of revenue now requires fewer tokens to produce, and that ratio keeps improving.
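Tokens per dollar and revenue per million tokens are reciprocals of each other, so the 27.4% drop in one corresponds to the 37.7% rise in the other. The sketch below shows that conversion using the rounded figures from this update; the function and variable names are illustrative, not part of any reporting tool.

```python
def revenue_per_million_tokens(tokens_per_dollar: float) -> float:
    """Convert tokens served per dollar of revenue into USD per million tokens."""
    return 1_000_000 / tokens_per_dollar


# Rounded figures from the text: ~9.8M tokens per dollar before, ~7.1M after.
before = revenue_per_million_tokens(9.8e6)
after = revenue_per_million_tokens(7.1e6)
gain_from_rounded = after / before - 1

# The same gain derived directly from the reported 27.4% decline.
gain_from_decline = 1 / (1 - 0.274) - 1

print(f"Revenue per million tokens: ${before:.3f} -> ${after:.3f}")
print(f"Gain from rounded endpoints: {gain_from_rounded:.1%}")
print(f"Gain implied by a 27.4% decline: {gain_from_decline:.1%}")
```

The rounded endpoints give a gain of about 38%, while the reported 27.4% decline converts to 37.7%, matching the revenue-per-million-tokens figure quoted earlier.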

Where We Stand Today

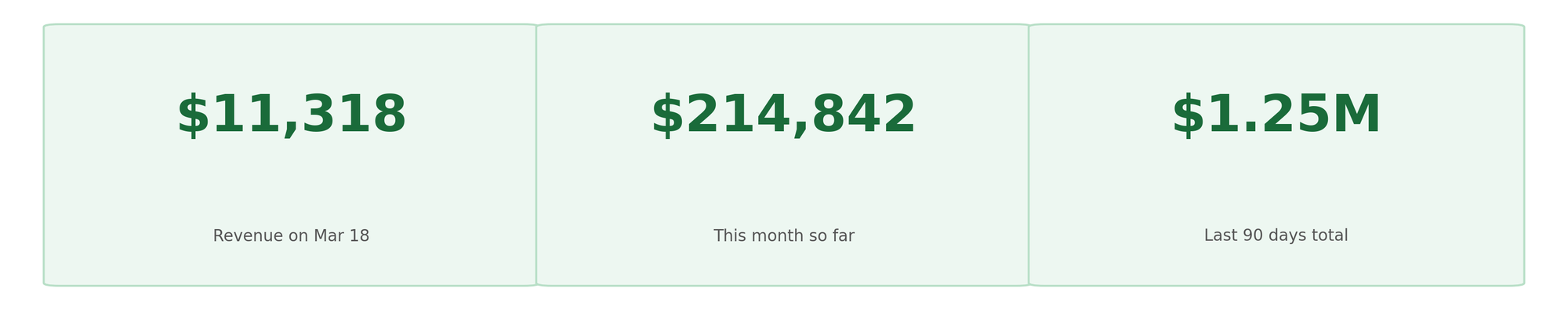

Our public revenue dashboard tells the current story in real time:

Organic pay-as-you-go revenue from our hosted public LLM inference grew 20% over the final 10 days of the measured period — driven by a wide, diverse base of customers, with no single deal or account skewing the results. This is broad-based, compounding growth: exactly the kind that lasts.

What This Means Going Forward

We're paying less per token and less per dollar of revenue than at any point in this company's history. Infrastructure costs are down alongside volume. And we now have a clear, unambiguous signal to optimize toward: sustainable paygo growth from a diverse customer base.

No subsidies. No underwater deals. No abuse vectors. Just real customers paying for real usage, and a fleet that earns its keep.

That's the flywheel. It's already turning.
